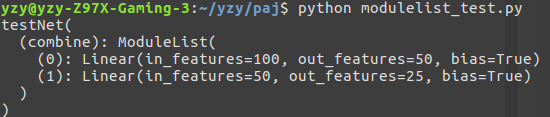

The following code is just a template; you see this pattern a lot in AI code. I have a specific question about `loss.backward()`.

In the code below we have a model, and because we pass `model.parameters()` to the optimizer, the optimizer and the model are connected. But there is no visible connection between `loss_fn` and the model, or between `loss_fn` and the optimizer. Suppose I add a new instance of `MSELoss`, say `loss_fn_2 = torch.nn.MSELoss(reduction='sum')`, and do exactly the same thing: `loss_2 = loss_fn_2(y_pred, y)` and `loss_2.backward()`. How does PyTorch recognize that `loss_2` is not related to the model and only `loss` is?

Consider a scenario where I would like to have (`model_a`, `loss_fn_a`, `optimizer_a`) and (`model_b`, `loss_fn_b`, `optimizer_b`), and I would like to keep `*_a` and `*_b` isolated from each other.

```python
import math
import torch

# Create Tensors to hold input and outputs.
x = torch.linspace(-math.pi, math.pi, 2000)
y = torch.sin(x)

# Prepare the input tensor (x, x^2, x^3).
p = torch.tensor([1, 2, 3])
xx = x.unsqueeze(-1).pow(p)

# Use the nn package to define our model and loss function.
model = torch.nn.Sequential(torch.nn.Linear(3, 1), torch.nn.Flatten(0, 1))
loss_fn = torch.nn.MSELoss(reduction='sum')

# Use the optim package to define an Optimizer that will update the weights of
# the model for us. Here we will use RMSprop; the optim package contains many other
# optimization algorithms. The first argument to the RMSprop constructor tells the
# optimizer which Tensors it should update.
learning_rate = 1e-3
optimizer = torch.optim.RMSprop(model.parameters(), lr=learning_rate)

for t in range(2000):
    # Forward pass: compute predicted y by passing x to the model.
    y_pred = model(xx)

    # Compute the loss.
    loss = loss_fn(y_pred, y)

    # Before the backward pass, use the optimizer object to zero all of the
    # gradients for the variables it will update (which are the learnable
    # weights of the model). This is because by default, gradients are
    # accumulated in buffers (i.e., not overwritten) whenever .backward()
    # is called.
    optimizer.zero_grad()

    # Backward pass: compute gradient of the loss with respect to model
    # parameters.
    loss.backward()

    # Calling the step function on an Optimizer makes an update to its
    # parameters.
    optimizer.step()

linear_layer = model[0]
print(f'Result: y = {linear_layer.bias.item()} + {linear_layer.weight[:, 0].item()} x'
      f' + {linear_layer.weight[:, 1].item()} x^2 + {linear_layer.weight[:, 2].item()} x^3')
```

It may seem that it's not connected, but the loss is actually connected to the model. Think of a model as a large nonlinear mathematical function. (For a simple explanation, imagine a linear regression model, which is a function along the lines of y = mx + b.)

Let's imagine a model something like:

```python
x1 = torch.tensor(2, requires_grad=True, dtype=torch.float16)
x2 = torch.tensor(3, requires_grad=True, dtype=torch.float16)
x3 = torch.tensor(1, requires_grad=True, dtype=torch.float16)
x4 = torch.tensor(4, requires_grad=True, dtype=torch.float16)
```

If you look at the code above, it is similar to the huge nonlinear function we construct using neural networks. Because `loss` is computed from `y_pred`, and `y_pred` is computed from the model's parameters, autograd records that chain of operations, and `loss.backward()` follows it back to those parameters. The same would hold for `loss_2`, since it is also computed from `y_pred`.
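To make the connection concrete, here is a minimal sketch of the two-model scenario from the question. The names `model_a`, `model_b`, `loss_fn_a`, `loss_fn_b`, `optimizer_a` and `optimizer_b` come from the question; the layer sizes, random data and learning rate are assumptions chosen only for illustration. It checks that a loss tensor carries a `grad_fn` linking it to whatever produced its inputs, and that calling `backward()` on a loss built from `model_a` leaves `model_b` untouched.

```python
import torch

torch.manual_seed(0)

# Two independent setups (sizes and learning rate are illustrative assumptions).
model_a = torch.nn.Linear(3, 1)
model_b = torch.nn.Linear(3, 1)
loss_fn_a = torch.nn.MSELoss(reduction='sum')
loss_fn_b = torch.nn.MSELoss(reduction='sum')
optimizer_a = torch.optim.RMSprop(model_a.parameters(), lr=1e-3)
optimizer_b = torch.optim.RMSprop(model_b.parameters(), lr=1e-3)

# Random stand-in data, not the sine-fitting data from the template.
x = torch.randn(8, 3)
y = torch.randn(8, 1)

# The loss tensor remembers how it was computed: its grad_fn links it,
# through y_pred_a, back to the parameters of model_a.
y_pred_a = model_a(x)
loss_a = loss_fn_a(y_pred_a, y)
has_graph = loss_a.grad_fn is not None

# backward() walks that recorded graph, so only model_a receives gradients.
optimizer_a.zero_grad()
loss_a.backward()
a_has_grads = all(p.grad is not None for p in model_a.parameters())
b_has_grads = any(p.grad is not None for p in model_b.parameters())

# optimizer_a only knows about model_a's parameters, so model_b is unchanged.
weights_b_before = model_b.weight.detach().clone()
optimizer_a.step()
b_unchanged = torch.equal(weights_b_before, model_b.weight)

# A second loss instance applied to a prediction from model_a is still
# connected to model_a: the link comes from the tensors, not the loss object.
loss_2 = torch.nn.MSELoss(reduction='sum')(model_a(x), y)
loss_2_connected = loss_2.grad_fn is not None

print(has_graph, a_has_grads, b_has_grads, b_unchanged, loss_2_connected)
```

Under these assumptions, isolation between `*_a` and `*_b` needs no extra bookkeeping: it follows from which tensors each loss was computed from and which parameters each optimizer was given. The loss function object itself holds no reference to any model.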
